Covariance matrix


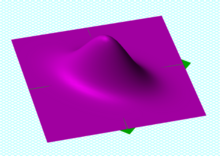

A bivariate Gaussian probability density function centered at (0, 0), with covariance matrix given by $\begin{bmatrix}1 & 0.5\\ 0.5 & 1\end{bmatrix}$.

Sample points from a bivariate Gaussian distribution with a standard deviation of 3 in roughly the lower-left to upper-right direction and of 1 in the orthogonal direction. Because the $x$ and $y$ components co-vary, the variances of $x$ and $y$ do not fully describe the distribution. A $2\times 2$ covariance matrix is needed; the directions of the arrows correspond to the eigenvectors of this covariance matrix and their lengths to the square roots of the eigenvalues.

In probability theory and statistics, a covariance matrix, also known as auto-covariance matrix, dispersion matrix, variance matrix, or variance–covariance matrix, is a matrix whose element in the i, j position is the covariance between the i-th and j-th elements of a random vector. A random vector is a random variable with multiple dimensions. Each element of the vector is a scalar random variable. Each element has either a finite number of observed empirical values or a finite or infinite number of potential values. The potential values are specified by a theoretical joint probability distribution.

Intuitively, the covariance matrix generalizes the notion of variance to multiple dimensions. As an example, the variation in a collection of random points in two-dimensional space cannot be characterized fully by a single number, nor would the variances in the $x$ and $y$ directions contain all of the necessary information; a $2\times 2$ matrix is needed to characterize the two-dimensional variation fully.

Because the covariance of the i-th random variable with itself is simply that random variable's variance, each element on the principal diagonal of the covariance matrix is the variance of one of the random variables. Because the covariance of the i-th random variable with the j-th one is the same thing as the covariance of the j-th random variable with the i-th random variable, every covariance matrix is symmetric. Also, every covariance matrix is positive semi-definite.

The auto-covariance matrix of a random vector $\mathbf{X}$ is typically denoted by $\operatorname{K}_{\mathbf{X}\mathbf{X}}$ or $\Sigma$.

Contents

1 Definition

1.1 Generalization of the variance

1.2 Conflicting nomenclatures and notations

2 Properties

2.1 Relation to the correlation matrix

2.2 Relation to the matrix of correlation coefficients

2.3 Inverse of the covariance matrix

2.4 Basic properties

2.5 Block matrices

3 Covariance matrix as a parameter of a distribution

4 Covariance matrix as a linear operator

5 Which matrices are covariance matrices?

6 Complex random vectors

6.1 Covariance matrix

6.2 Pseudo-covariance matrix

6.3 Properties

7 Estimation

8 Applications

9 See also

10 References

11 Further reading

Definition

Throughout this article, boldfaced unsubscripted $\mathbf{X}$ and $\mathbf{Y}$ denote random vectors, and subscripted $X_i$ and $Y_i$ denote scalar random variables.

If the entries in the column vector

$$\mathbf{X} = (X_1, X_2, \ldots, X_n)^{\mathrm{T}}$$

are random variables, each with finite variance and expected value, then the covariance matrix $\operatorname{K}_{\mathbf{X}\mathbf{X}}$ is the matrix whose $(i,j)$ entry is the covariance

$$\operatorname{K}_{X_i X_j} = \operatorname{cov}[X_i, X_j] = \operatorname{E}[(X_i - \operatorname{E}[X_i])(X_j - \operatorname{E}[X_j])]$$

where the operator $\operatorname{E}$ denotes the expected value (mean) of its argument.

In other words,

$$\operatorname{K}_{\mathbf{X}\mathbf{X}} = \begin{bmatrix}
\operatorname{E}[(X_1 - \operatorname{E}[X_1])(X_1 - \operatorname{E}[X_1])] & \operatorname{E}[(X_1 - \operatorname{E}[X_1])(X_2 - \operatorname{E}[X_2])] & \cdots & \operatorname{E}[(X_1 - \operatorname{E}[X_1])(X_n - \operatorname{E}[X_n])] \\
\operatorname{E}[(X_2 - \operatorname{E}[X_2])(X_1 - \operatorname{E}[X_1])] & \operatorname{E}[(X_2 - \operatorname{E}[X_2])(X_2 - \operatorname{E}[X_2])] & \cdots & \operatorname{E}[(X_2 - \operatorname{E}[X_2])(X_n - \operatorname{E}[X_n])] \\
\vdots & \vdots & \ddots & \vdots \\
\operatorname{E}[(X_n - \operatorname{E}[X_n])(X_1 - \operatorname{E}[X_1])] & \operatorname{E}[(X_n - \operatorname{E}[X_n])(X_2 - \operatorname{E}[X_2])] & \cdots & \operatorname{E}[(X_n - \operatorname{E}[X_n])(X_n - \operatorname{E}[X_n])]
\end{bmatrix}$$

The definition above is equivalent to the matrix equality

$$\operatorname{K}_{\mathbf{X}\mathbf{X}} = \operatorname{cov}[\mathbf{X}, \mathbf{X}] = \operatorname{E}[(\mathbf{X} - \boldsymbol{\mu}_{\mathbf{X}})(\mathbf{X} - \boldsymbol{\mu}_{\mathbf{X}})^{\mathrm{T}}] = \operatorname{E}[\mathbf{X}\mathbf{X}^{\mathrm{T}}] - \boldsymbol{\mu}_{\mathbf{X}}\boldsymbol{\mu}_{\mathbf{X}}^{\mathrm{T}} \qquad \text{(Eq.1)}$$

where $\boldsymbol{\mu}_{\mathbf{X}} = \operatorname{E}[\mathbf{X}]$.
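As a small numerical sketch of Eq.1, the two expressions for the covariance matrix can be compared on a made-up discrete distribution. The outcomes and probabilities below are illustrative assumptions, not taken from the text; Python with NumPy is used.

```python
import numpy as np

# Hypothetical discrete distribution of X = (X1, X2)^T:
# each row is one possible outcome, with the matching probability below.
outcomes = np.array([[0.0, 0.0],
                     [1.0, 1.0],
                     [2.0, 1.0],
                     [3.0, 3.0]])
probs = np.array([0.1, 0.4, 0.3, 0.2])

# mu_X = E[X]
mu = probs @ outcomes

# First form: E[(X - mu)(X - mu)^T], a probability-weighted sum of outer products.
centered = outcomes - mu
K_centered = (centered * probs[:, None]).T @ centered

# Second form: E[X X^T] - mu mu^T.
K_moment = (outcomes * probs[:, None]).T @ outcomes - np.outer(mu, mu)

print(K_centered)
print(K_moment)
```

Both arrays agree up to floating-point rounding, and the diagonal entries are the variances of $X_1$ and $X_2$.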

Generalization of the variance

This form (Eq.1) can be seen as a generalization of the scalar-valued variance to higher dimensions. Recall that for a scalar-valued random variable $X$

$$\sigma_X^2 = \operatorname{var}(X) = \operatorname{E}[(X - \operatorname{E}[X])^2] = \operatorname{E}[(X - \operatorname{E}[X]) \cdot (X - \operatorname{E}[X])].$$

Indeed, the entries on the diagonal of the auto-covariance matrix $\operatorname{K}_{\mathbf{X}\mathbf{X}}$ are the variances of the individual elements of the vector $\mathbf{X}$.

Conflicting nomenclatures and notations

Nomenclatures differ. Some statisticians, following the probabilist William Feller in his two-volume book An Introduction to Probability Theory and Its Applications,[2] call the matrix $\operatorname{K}_{\mathbf{X}\mathbf{X}}$ the variance of the random vector $\mathbf{X}$, because it is the natural generalization to higher dimensions of the one-dimensional variance. Others call it the covariance matrix, because it is the matrix of covariances between the scalar components of the vector $\mathbf{X}$.

$$\operatorname{var}(\mathbf{X}) = \operatorname{cov}(\mathbf{X}) = \operatorname{E}\left[(\mathbf{X} - \operatorname{E}[\mathbf{X}])(\mathbf{X} - \operatorname{E}[\mathbf{X}])^{\mathrm{T}}\right].$$

Both forms are quite standard, and there is no ambiguity between them. The matrix $\operatorname{K}_{\mathbf{X}\mathbf{X}}$ is also often called the variance–covariance matrix, since its diagonal terms are variances.

By comparison, the notation for the cross-covariance matrix between two vectors is

$$\operatorname{cov}(\mathbf{X}, \mathbf{Y}) = \operatorname{K}_{\mathbf{X}\mathbf{Y}} = \operatorname{E}\left[(\mathbf{X} - \operatorname{E}[\mathbf{X}])(\mathbf{Y} - \operatorname{E}[\mathbf{Y}])^{\mathrm{T}}\right].$$

Properties

Relation to the correlation matrix

The auto-covariance matrix $\operatorname{K}_{\mathbf{X}\mathbf{X}}$ is related to the autocorrelation matrix $\operatorname{R}_{\mathbf{X}\mathbf{X}}$ by

$$\operatorname{K}_{\mathbf{X}\mathbf{X}} = \operatorname{E}[(\mathbf{X} - \operatorname{E}[\mathbf{X}])(\mathbf{X} - \operatorname{E}[\mathbf{X}])^{\mathrm{T}}] = \operatorname{R}_{\mathbf{X}\mathbf{X}} - \operatorname{E}[\mathbf{X}]\operatorname{E}[\mathbf{X}]^{\mathrm{T}}$$

where the autocorrelation matrix is defined as $\operatorname{R}_{\mathbf{X}\mathbf{X}} = \operatorname{E}[\mathbf{X}\mathbf{X}^{\mathrm{T}}]$.

Relation to the matrix of correlation coefficients

An entity closely related to the covariance matrix is the matrix of Pearson product-moment correlation coefficients between each of the random variables in the random vector $\mathbf{X}$, which can be written as

$$\operatorname{corr}(\mathbf{X}) = \big(\operatorname{diag}(\operatorname{K}_{\mathbf{X}\mathbf{X}})\big)^{-\frac{1}{2}} \, \operatorname{K}_{\mathbf{X}\mathbf{X}} \, \big(\operatorname{diag}(\operatorname{K}_{\mathbf{X}\mathbf{X}})\big)^{-\frac{1}{2}},$$

where $\operatorname{diag}(\operatorname{K}_{\mathbf{X}\mathbf{X}})$ is the matrix of the diagonal elements of $\operatorname{K}_{\mathbf{X}\mathbf{X}}$ (i.e., a diagonal matrix of the variances of $X_i$ for $i = 1, \dots, n$).

Equivalently, the correlation matrix can be seen as the covariance matrix of the standardized random variables $X_i/\sigma(X_i)$ for $i = 1, \dots, n$:

$$\operatorname{corr}(\mathbf{X}) = \begin{bmatrix}
1 & \frac{\operatorname{E}[(X_1 - \mu_1)(X_2 - \mu_2)]}{\sigma(X_1)\sigma(X_2)} & \cdots & \frac{\operatorname{E}[(X_1 - \mu_1)(X_n - \mu_n)]}{\sigma(X_1)\sigma(X_n)} \\
\frac{\operatorname{E}[(X_2 - \mu_2)(X_1 - \mu_1)]}{\sigma(X_2)\sigma(X_1)} & 1 & \cdots & \frac{\operatorname{E}[(X_2 - \mu_2)(X_n - \mu_n)]}{\sigma(X_2)\sigma(X_n)} \\
\vdots & \vdots & \ddots & \vdots \\
\frac{\operatorname{E}[(X_n - \mu_n)(X_1 - \mu_1)]}{\sigma(X_n)\sigma(X_1)} & \frac{\operatorname{E}[(X_n - \mu_n)(X_2 - \mu_2)]}{\sigma(X_n)\sigma(X_2)} & \cdots & 1
\end{bmatrix}.$$

Each element on the principal diagonal of a correlation matrix is the correlation of a random variable with itself, which always equals 1. Each off-diagonal element is between −1 and +1 inclusive.
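The rescaling by the inverse square roots of the diagonal can be sketched numerically as follows. The 3×3 covariance matrix is a made-up example, and the variable names are illustrative only.

```python
import numpy as np

# Hypothetical covariance matrix (symmetric, positive definite).
K = np.array([[4.0, 2.0, 0.0],
              [2.0, 9.0, -3.0],
              [0.0, -3.0, 16.0]])

# (diag(K))^(-1/2): diagonal matrix holding 1 / standard deviation.
d_inv_sqrt = np.diag(1.0 / np.sqrt(np.diag(K)))

# corr(X) = diag(K)^(-1/2) K diag(K)^(-1/2)
corr = d_inv_sqrt @ K @ d_inv_sqrt

print(corr)
```

With standard deviations 2, 3 and 4, the entry for the first two variables is 2 / (2 · 3) = 1/3 and the entry for the last two is −3 / (3 · 4) = −0.25, while the diagonal is all ones.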

Inverse of the covariance matrix

The inverse of this matrix, $\operatorname{K}_{\mathbf{X}\mathbf{X}}^{-1}$, if it exists, is the inverse covariance matrix, also known as the precision matrix or concentration matrix.

Basic properties

For $\operatorname{K}_{\mathbf{X}\mathbf{X}} = \operatorname{var}(\mathbf{X}) = \operatorname{E}\left[(\mathbf{X} - \operatorname{E}[\mathbf{X}])(\mathbf{X} - \operatorname{E}[\mathbf{X}])^{\mathrm{T}}\right]$ and $\boldsymbol{\mu}_{\mathbf{X}} = \operatorname{E}[\mathbf{X}]$, where $\mathbf{X} = (X_1, \ldots, X_n)^{\mathrm{T}}$ is an $n$-dimensional random vector, the following basic properties apply:

- $\operatorname{K}_{\mathbf{X}\mathbf{X}} = \operatorname{E}(\mathbf{X}\mathbf{X}^{\mathrm{T}}) - \boldsymbol{\mu}_{\mathbf{X}}\boldsymbol{\mu}_{\mathbf{X}}^{\mathrm{T}}$

- $\operatorname{K}_{\mathbf{X}\mathbf{X}}$ is positive-semidefinite, i.e. $\mathbf{a}^{\mathrm{T}}\operatorname{K}_{\mathbf{X}\mathbf{X}}\mathbf{a} \geq 0$ for all $\mathbf{a} \in \mathbb{R}^n$

- $\operatorname{K}_{\mathbf{X}\mathbf{X}}$ is symmetric, i.e. $\operatorname{K}_{\mathbf{X}\mathbf{X}}^{\mathrm{T}} = \operatorname{K}_{\mathbf{X}\mathbf{X}}$

- For any constant (i.e. non-random) $m \times n$ matrix $\mathbf{A}$ and constant $m \times 1$ vector $\mathbf{a}$, one has $\operatorname{var}(\mathbf{A}\mathbf{X} + \mathbf{a}) = \mathbf{A}\,\operatorname{var}(\mathbf{X})\,\mathbf{A}^{\mathrm{T}}$

- If $\mathbf{Y}$ is another random vector with the same dimension as $\mathbf{X}$, then $\operatorname{var}(\mathbf{X} + \mathbf{Y}) = \operatorname{var}(\mathbf{X}) + \operatorname{cov}(\mathbf{X}, \mathbf{Y}) + \operatorname{cov}(\mathbf{Y}, \mathbf{X}) + \operatorname{var}(\mathbf{Y})$, where $\operatorname{cov}(\mathbf{X}, \mathbf{Y})$ is the cross-covariance matrix of $\mathbf{X}$ and $\mathbf{Y}$.
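These properties also hold exactly (up to rounding) for sample covariance matrices, which gives a convenient way to check them numerically. The sketch below uses randomly generated data and a hypothetical helper `cross_cov`; none of the names come from the text.

```python
import numpy as np

rng = np.random.default_rng(0)

def cross_cov(U, V):
    """Sample cross-covariance of two data sets whose rows are observations."""
    Uc = U - U.mean(axis=0)
    Vc = V - V.mean(axis=0)
    return Uc.T @ Vc / (U.shape[0] - 1)

# Hypothetical data: 1000 observations of 3-dimensional X and Y.
X = rng.standard_normal((1000, 3))
Y = 0.5 * X + rng.standard_normal((1000, 3))

A = np.array([[1.0, 2.0, 0.0],
              [0.0, -1.0, 3.0]])   # constant 2x3 matrix
a = np.array([10.0, -5.0])         # constant shift of length 2

var_X = cross_cov(X, X)

# var(AX + a) = A var(X) A^T: the constant shift a drops out.
AXa = X @ A.T + a
lhs_affine = cross_cov(AXa, AXa)
rhs_affine = A @ var_X @ A.T

# var(X + Y) = var(X) + cov(X, Y) + cov(Y, X) + var(Y)
lhs_sum = cross_cov(X + Y, X + Y)
rhs_sum = var_X + cross_cov(X, Y) + cross_cov(Y, X) + cross_cov(Y, Y)

# Symmetry and positive semi-definiteness: all eigenvalues are >= 0.
eigenvalues = np.linalg.eigvalsh(var_X)

print(np.allclose(lhs_affine, rhs_affine), np.allclose(lhs_sum, rhs_sum))
print(eigenvalues)
```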

Block matrices

The joint mean $\boldsymbol{\mu}_{X,Y}$ and joint covariance matrix $\boldsymbol{\Sigma}_{X,Y}$ of $\boldsymbol{X}$ and $\boldsymbol{Y}$ can be written in block form

$$\boldsymbol{\mu}_{X,Y} = \begin{bmatrix}\boldsymbol{\mu}_X \\ \boldsymbol{\mu}_Y\end{bmatrix}, \qquad \boldsymbol{\Sigma}_{X,Y} = \begin{bmatrix}\boldsymbol{\Sigma}_{XX} & \boldsymbol{\Sigma}_{XY} \\ \boldsymbol{\Sigma}_{YX} & \boldsymbol{\Sigma}_{YY}\end{bmatrix}$$

where $\boldsymbol{\Sigma}_{XX} = \operatorname{var}(\boldsymbol{X})$, $\boldsymbol{\Sigma}_{YY} = \operatorname{var}(\boldsymbol{Y})$, and $\boldsymbol{\Sigma}_{XY} = \boldsymbol{\Sigma}_{YX}^{\mathrm{T}} = \operatorname{cov}(\boldsymbol{X}, \boldsymbol{Y})$.

$\boldsymbol{\Sigma}_{XX}$ and $\boldsymbol{\Sigma}_{YY}$ can be identified as the variance matrices of the marginal distributions for $\boldsymbol{X}$ and $\boldsymbol{Y}$ respectively.

If $\boldsymbol{X}$ and $\boldsymbol{Y}$ are jointly normally distributed,

$$\boldsymbol{X}, \boldsymbol{Y} \sim \mathcal{N}(\boldsymbol{\mu}_{X,Y}, \boldsymbol{\Sigma}_{X,Y}),$$

then the conditional distribution for $\boldsymbol{Y}$ given $\boldsymbol{X} = \mathbf{x}$ is given by[5]

$$\boldsymbol{Y} \mid \boldsymbol{X} \sim \mathcal{N}(\boldsymbol{\mu}_{Y \mid X}, \boldsymbol{\Sigma}_{Y \mid X}),$$

defined by conditional mean

$$\boldsymbol{\mu}_{Y \mid X} = \boldsymbol{\mu}_Y + \boldsymbol{\Sigma}_{YX}\boldsymbol{\Sigma}_{XX}^{-1}\left(\mathbf{x} - \boldsymbol{\mu}_X\right)$$

and conditional variance

$$\boldsymbol{\Sigma}_{Y \mid X} = \boldsymbol{\Sigma}_{YY} - \boldsymbol{\Sigma}_{YX}\boldsymbol{\Sigma}_{XX}^{-1}\boldsymbol{\Sigma}_{XY}.$$

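The matrix $\boldsymbol{\Sigma}_{YX}\boldsymbol{\Sigma}_{XX}^{-1}$ is known as the matrix of regression coefficients, while in linear algebra $\boldsymbol{\Sigma}_{Y \mid X}$ is the Schur complement of $\boldsymbol{\Sigma}_{XX}$ in $\boldsymbol{\Sigma}_{X,Y}$.

The matrix of regression coefficients may often be given in transpose form, $\boldsymbol{\Sigma}_{XX}^{-1}\boldsymbol{\Sigma}_{XY}$, suitable for post-multiplying a row vector of explanatory variables $\mathbf{x}^{\mathrm{T}}$ rather than pre-multiplying a column vector $\mathbf{x}$.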
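The conditional mean and conditional variance can be computed directly from the blocks. The following is a minimal sketch with made-up block values for a 2-dimensional $\boldsymbol{X}$ and a 1-dimensional $\boldsymbol{Y}$; it uses a linear solve rather than an explicit inverse, which is a design choice of the sketch and not something prescribed by the text.

```python
import numpy as np

# Hypothetical means and covariance blocks (X is 2-dimensional, Y is 1-dimensional).
mu_X = np.array([1.0, 2.0])
mu_Y = np.array([0.5])
S_XX = np.array([[2.0, 0.5],
                 [0.5, 1.0]])
S_XY = np.array([[0.8],
                 [0.3]])
S_YX = S_XY.T
S_YY = np.array([[1.5]])

# Observed value of X to condition on (also made up).
x = np.array([1.5, 1.0])

# Regression coefficients S_YX S_XX^{-1}.
# solve(S_XX, S_XY) gives S_XX^{-1} S_XY; its transpose is S_YX S_XX^{-1}
# because S_XX is symmetric.
B = np.linalg.solve(S_XX, S_XY).T

mu_cond = mu_Y + B @ (x - mu_X)   # conditional mean
S_cond = S_YY - B @ S_XY          # conditional variance (Schur complement)

print(mu_cond, S_cond)
```

The conditional variance is never larger than the marginal variance $\boldsymbol{\Sigma}_{YY}$, and it does not depend on the observed value $\mathbf{x}$.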

Covariance matrix as a parameter of a distribution

If a vector of n possibly correlated random variables is jointly normally distributed, or more generally elliptically distributed, then its probability density function can be expressed in terms of the covariance matrix.[6]
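For illustration, in the jointly normal case with mean vector $\boldsymbol{\mu}$ and a non-singular covariance matrix $\boldsymbol{\Sigma}$ (the symbols and the non-singularity requirement are assumptions of this sketch), the density takes the standard form

$$f(\mathbf{x}) = (2\pi)^{-n/2} \det(\boldsymbol{\Sigma})^{-1/2} \exp\left(-\tfrac{1}{2}(\mathbf{x} - \boldsymbol{\mu})^{\mathrm{T}} \boldsymbol{\Sigma}^{-1} (\mathbf{x} - \boldsymbol{\mu})\right),$$

so the covariance matrix enters through its determinant and its inverse.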

Covariance matrix as a linear operator

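Applied to one vector, the covariance matrix maps a linear combination $\mathbf{c}$ of the random variables $\mathbf{X}$ onto a vector of covariances with those variables: $\mathbf{c}^{\mathrm{T}}\Sigma = \operatorname{cov}(\mathbf{c}^{\mathrm{T}}\mathbf{X}, \mathbf{X})$. Treated as a bilinear form, it yields the covariance between two linear combinations: $\mathbf{d}^{\mathrm{T}}\Sigma\mathbf{c} = \operatorname{cov}(\mathbf{d}^{\mathrm{T}}\mathbf{X}, \mathbf{c}^{\mathrm{T}}\mathbf{X})$. The variance of a linear combination is then $\mathbf{c}^{\mathrm{T}}\Sigma\mathbf{c}$, its covariance with itself.

Similarly, the (pseudo-)inverse covariance matrix provides an inner product $\langle c - \mu | \Sigma^{+} | c - \mu \rangle$, which induces the Mahalanobis distance, a measure of the "unlikelihood" of $c$.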
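A short numerical sketch of these operator views follows. All values are made up; `d` is a second, hypothetical coefficient vector used to show the bilinear form $\mathbf{d}^{\mathrm{T}}\Sigma\mathbf{c}$, and `point` plays the role of $c$ in the inner product with the pseudo-inverse, whose value is the squared quantity commonly called the Mahalanobis distance.

```python
import numpy as np

# Hypothetical covariance matrix, mean, coefficient vectors and test point.
Sigma = np.array([[4.0, 0.0],
                  [0.0, 1.0]])
mu = np.array([1.0, 1.0])
c = np.array([3.0, 2.0])
d = np.array([1.0, -1.0])
point = np.array([3.0, 2.0])

# c^T Sigma: covariances of the combination c^T X with each component of X.
cov_with_X = c @ Sigma

# d^T Sigma c: covariance between the combinations d^T X and c^T X.
cov_between = d @ Sigma @ c

# c^T Sigma c: variance of the combination c^T X.
var_comb = c @ Sigma @ c

# <point - mu | Sigma^+ | point - mu> using the Moore-Penrose pseudo-inverse.
Sigma_pinv = np.linalg.pinv(Sigma)
diff = point - mu
quad_form = diff @ Sigma_pinv @ diff

print(cov_with_X, cov_between, var_comb, quad_form)
```

For this diagonal example the quadratic form is $2^2/4 + 1^2/1 = 2$; using the pseudo-inverse keeps the expression defined even when the covariance matrix is singular.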

Which matrices are covariance matrices?

From the identity just above, let $\mathbf{b}$ be an $n \times 1$ real-valued vector; then

$$\operatorname{var}(\mathbf{b}^{\mathrm{T}}\mathbf{X}) = \mathbf{b}^{\mathrm{T}}\operatorname{var}(\mathbf{X})\,\mathbf{b},$$

which must always be nonnegative, since it is the variance of a real-valued random variable. A covariance matrix is always a positive-semidefinite matrix, since

$$\begin{aligned}
& w^{\mathrm{T}}\operatorname{E}\left[(\mathbf{X} - \operatorname{E}[\mathbf{X}])(\mathbf{X} - \operatorname{E}[\mathbf{X}])^{\mathrm{T}}\right]w = \operatorname{E}\left[w^{\mathrm{T}}(\mathbf{X} - \operatorname{E}[\mathbf{X}])(\mathbf{X} - \operatorname{E}[\mathbf{X}])^{\mathrm{T}}w\right] \\[5pt]
={} & \operatorname{E}\big[\big(w^{\mathrm{T}}(\mathbf{X} - \operatorname{E}[\mathbf{X}])\big)^2\big] \geq 0 \quad \text{since } w^{\mathrm{T}}(\mathbf{X} - \operatorname{E}[\mathbf{X}]) \text{ is a scalar}.
\end{aligned}$$

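Conversely, every symmetric positive semi-definite matrix is a covariance matrix. To see this, suppose $\mathbf{M}$ is an $n \times n$ symmetric positive-semidefinite matrix. By the finite-dimensional case of the spectral theorem, $\mathbf{M}$ has a nonnegative symmetric square root, which can be denoted by $\mathbf{M}^{1/2}$. Let $\mathbf{X}$ be any $n \times 1$ column vector-valued random variable whose covariance matrix is the $n \times n$ identity matrix. Then

$$\operatorname{var}(\mathbf{M}^{1/2}\mathbf{X}) = \mathbf{M}^{1/2}\,\operatorname{var}(\mathbf{X})\,\mathbf{M}^{1/2} = \mathbf{M}.$$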
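The construction can be tried out numerically. In this sketch the matrix `M` is a made-up example, the symmetric square root is built from an eigendecomposition, and standard normal samples stand in for a random vector with identity covariance; these choices are assumptions for illustration.

```python
import numpy as np

# Hypothetical symmetric positive semi-definite matrix.
M = np.array([[2.0, 1.0],
              [1.0, 2.0]])

# Symmetric square root via the spectral decomposition M = V diag(w) V^T.
eigvals, eigvecs = np.linalg.eigh(M)
eigvals = np.clip(eigvals, 0.0, None)   # guard against tiny negative rounding errors
M_half = eigvecs @ np.diag(np.sqrt(eigvals)) @ eigvecs.T

# Exact identity: M^(1/2) I M^(1/2) = M.
var_transformed = M_half @ np.eye(2) @ M_half

# Empirical check: X has (approximately) identity covariance, rows are observations.
rng = np.random.default_rng(0)
X = rng.standard_normal((200_000, 2))
Y = X @ M_half.T                        # each row is M^(1/2) x
sample_cov = np.cov(Y, rowvar=False)

print(var_transformed)
print(sample_cov)
```

The sample covariance of the transformed data is close to `M`, with the remaining gap due to sampling error.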

Complex random vectors

Covariance matrix

The variance of a complex scalar-valued random variable $Z$ with expected value $\mu_Z$ is conventionally defined using complex conjugation:

$$\operatorname{var}(Z) = \operatorname{E}\left[(Z - \mu_Z)\overline{(Z - \mu_Z)}\right],$$

where the complex conjugate of a complex number $z$ is denoted $\overline{z}$; thus the variance of a complex random variable is a real number.

If $\mathbf{Z} = (Z_1, \ldots, Z_n)^{\mathrm{T}}$ is a column vector of complex-valued random variables, then the covariance matrix is defined as the expectation of the product of the centered vector with its conjugate transpose:

$$\operatorname{K}_{\mathbf{Z}\mathbf{Z}} = \operatorname{cov}[\mathbf{Z}, \mathbf{Z}] = \operatorname{E}\left[(\mathbf{Z} - \boldsymbol{\mu}_{\mathbf{Z}})(\mathbf{Z} - \boldsymbol{\mu}_{\mathbf{Z}})^{\mathrm{H}}\right],$$

where ${}^{\mathrm{H}}$ denotes the conjugate transpose, formed by both transposing and conjugating.

Pseudo-covariance matrix

For complex random vectors, another kind of second central moment, the pseudo-covariance matrix (also called relation matrix), is defined as follows. In contrast to the covariance matrix defined above, Hermitian transposition is replaced by ordinary transposition in the definition:

$$\operatorname{J}_{\mathbf{Z}\mathbf{Z}} = \operatorname{cov}[\mathbf{Z}, \overline{\mathbf{Z}}] = \operatorname{E}\left[(\mathbf{Z} - \boldsymbol{\mu}_{\mathbf{Z}})(\mathbf{Z} - \boldsymbol{\mu}_{\mathbf{Z}})^{\mathrm{T}}\right]$$

Properties

- The covariance matrix is a Hermitian matrix, i.e. $\operatorname{K}_{\mathbf{Z}\mathbf{Z}}^{\mathrm{H}} = \operatorname{K}_{\mathbf{Z}\mathbf{Z}}$.[1]:p. 179

- The diagonal elements of the covariance matrix are real.[1]:p. 179
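The difference between the covariance and pseudo-covariance matrices, and the two properties listed above, can be illustrated on sample data. The complex samples below are randomly generated for the sketch, and expectations are replaced by sample averages.

```python
import numpy as np

rng = np.random.default_rng(0)
N = 1000

# Hypothetical complex samples: rows are observations, columns are Z_1, Z_2, Z_3.
Z = rng.standard_normal((N, 3)) + 1j * rng.standard_normal((N, 3))
Z[:, 1] += 0.5 * Z[:, 0]          # introduce some dependence between components

Zc = Z - Z.mean(axis=0)           # subtract the sample mean

# Covariance: average of (Z - mu)(Z - mu)^H, i.e. the second factor is conjugated.
K = Zc.T @ Zc.conj() / N

# Pseudo-covariance: average of (Z - mu)(Z - mu)^T, with no conjugation.
J = Zc.T @ Zc / N

print(np.allclose(K, K.conj().T))          # K is Hermitian
print(np.allclose(np.diag(K).imag, 0.0))   # diagonal of K is real
print(np.allclose(J, J.T))                 # J is symmetric, but generally not Hermitian
```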

Estimation

If $\mathbf{M}_{\mathbf{X}}$ and $\mathbf{M}_{\mathbf{Y}}$ are centered data matrices of dimension $n \times p$ and $n \times q$ respectively, i.e. with $n$ rows of observations of $p$ and $q$ columns of variables, from which the column means have been subtracted, then, if the column means were estimated from the data, the sample covariance matrices $\mathbf{Q}_{\mathbf{X}}$ and $\mathbf{Q}_{\mathbf{XY}}$ can be defined to be

$$\mathbf{Q}_{\mathbf{X}} = \frac{1}{n-1}\mathbf{M}_{\mathbf{X}}^{\mathrm{T}}\mathbf{M}_{\mathbf{X}}, \qquad \mathbf{Q}_{\mathbf{XY}} = \frac{1}{n-1}\mathbf{M}_{\mathbf{X}}^{\mathrm{T}}\mathbf{M}_{\mathbf{Y}}$$

or, if the column means were known a priori,

$$\mathbf{Q}_{\mathbf{X}} = \frac{1}{n}\mathbf{M}_{\mathbf{X}}^{\mathrm{T}}\mathbf{M}_{\mathbf{X}}, \qquad \mathbf{Q}_{\mathbf{XY}} = \frac{1}{n}\mathbf{M}_{\mathbf{X}}^{\mathrm{T}}\mathbf{M}_{\mathbf{Y}}.$$

These empirical sample covariance matrices are the most straightforward and most often used estimators for the covariance matrices, but other estimators also exist, including regularised or shrinkage estimators, which may have better properties.
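The estimators with the $1/(n-1)$ factor can be sketched in a few lines and compared against NumPy's built-in `np.cov`. The data are randomly generated for illustration, with rows as observations to match the $\mathbf{M}^{\mathrm{T}}\mathbf{M}$ form of the formulas.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p, q = 500, 3, 2

# Hypothetical data: n observations of p variables (X) and q variables (Y).
X = rng.standard_normal((n, p))
Y = X[:, :q] + 0.1 * rng.standard_normal((n, q))

# Centered data matrices: subtract the column means estimated from the data.
M_X = X - X.mean(axis=0)
M_Y = Y - Y.mean(axis=0)

# Sample covariance and cross-covariance with the 1/(n-1) factor.
Q_X = M_X.T @ M_X / (n - 1)
Q_XY = M_X.T @ M_Y / (n - 1)

# Reference values from NumPy (rowvar=False means columns are variables).
Q_X_ref = np.cov(X, rowvar=False)
Q_joint_ref = np.cov(np.hstack([X, Y]), rowvar=False)

print(np.allclose(Q_X, Q_X_ref))
print(np.allclose(Q_XY, Q_joint_ref[:p, p:]))
```

`np.cov` divides by $n-1$ by default, so it matches the first pair of formulas; the upper-right block of the joint covariance of the stacked data is the cross-covariance estimate.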

Applications

The covariance matrix is a useful tool in many different areas. From it a transformation matrix can be derived, called a whitening transformation, that allows one to completely decorrelate the data or, from a different point of view, to find an optimal basis for representing the data in a compact way (see Rayleigh quotient for a formal proof and additional properties of covariance matrices). This is called principal component analysis (PCA) and the Karhunen–Loève transform (KL-transform).
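One common way to build such a transformation is from the eigendecomposition of the sample covariance matrix. The sketch below is one possible construction (often called PCA whitening), not the only one; the data are simulated from the bivariate Gaussian with covariance matrix $\begin{bmatrix}1 & 0.5\\ 0.5 & 1\end{bmatrix}$ used in the figure caption, and all variable names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)

# Simulated data: 5000 draws from a zero-mean bivariate Gaussian.
Sigma = np.array([[1.0, 0.5],
                  [0.5, 1.0]])
X = rng.multivariate_normal(mean=[0.0, 0.0], cov=Sigma, size=5000)

# Center the data and estimate the covariance matrix.
Xc = X - X.mean(axis=0)
S = np.cov(Xc, rowvar=False)

# Eigendecomposition S = V diag(w) V^T (eigh is meant for symmetric matrices).
eigvals, eigvecs = np.linalg.eigh(S)

# PCA scores: project onto the eigenvectors; their covariance is diag(w).
scores = Xc @ eigvecs

# Whitening matrix W = diag(w)^(-1/2) V^T, so that W S W^T = I.
W = np.diag(1.0 / np.sqrt(eigvals)) @ eigvecs.T
Z = Xc @ W.T

print(np.cov(scores, rowvar=False))   # approximately diagonal
print(np.cov(Z, rowvar=False))        # approximately the identity matrix
```

The projected data are decorrelated, and after rescaling by the inverse square roots of the eigenvalues each component also has unit variance.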

The covariance matrix plays a key role in financial economics, especially in portfolio theory and its mutual fund separation theorem and in the capital asset pricing model. The matrix of covariances among various assets' returns is used to determine, under certain assumptions, the relative amounts of different assets that investors should (in a normative analysis) or are predicted to (in a positive analysis) choose to hold in a context of diversification.

See also

- Multivariate statistics

- Gramian matrix

- Eigenvalue decomposition

- Quadratic form (statistics)

- Principal components

References

1. Park, Kun Il (2018). Fundamentals of Probability and Stochastic Processes with Applications to Communications. Springer. ISBN 978-3-319-68074-3.

2. Feller, William (1971). An Introduction to Probability Theory and Its Applications. Wiley. ISBN 978-0-471-25709-7.

3. Wasserman, Larry (2004). All of Statistics: A Concise Course in Statistical Inference. ISBN 0-387-40272-1.

4. Taboga, Marco (2010). "Lectures on probability theory and mathematical statistics".

5. Eaton, Morris L. (1983). Multivariate Statistics: a Vector Space Approach. John Wiley and Sons. pp. 116–117. ISBN 0-471-02776-6.

6. Frahm, G.; Junker, M.; Szimayer, A. (2003). "Elliptical copulas: Applicability and limitations". Statistics & Probability Letters. 63 (3): 275–286. doi:10.1016/S0167-7152(03)00092-0.

7. Lapidoth, Amos (2009). A Foundation in Digital Communication. Cambridge University Press. ISBN 978-0-521-19395-5.

8. Brookes, Mike. "The Matrix Reference Manual".

Further reading

- Hazewinkel, Michiel, ed. (2001) [1994], "Covariance matrix", Encyclopedia of Mathematics, Springer Science+Business Media B.V. / Kluwer Academic Publishers, ISBN 978-1-55608-010-4

- Weisstein, Eric W. "Covariance Matrix". MathWorld.

- van Kampen, N. G. (1981). Stochastic processes in physics and chemistry. New York: North-Holland. ISBN 0-444-86200-5.
